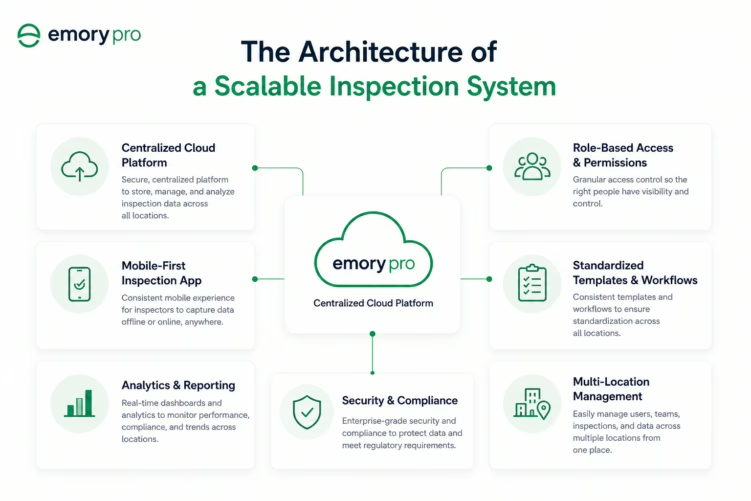

Centralised Template Management

All inspection templates are managed from a central administration interface. The central team can publish new templates or update existing ones, with changes taking effect across all sites simultaneously. Sites can access additional location-specific templates, but the central templates cannot be modified at site level.

This architecture ensures that standard templates remain standard as the organisation grows. It also enables rapid deployment of new inspection requirements across all sites: when a regulatory change requires a new inspection item, it is added once and deployed everywhere, rather than cascaded manually through fifty site administrators.
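The publish-once behaviour described above can be illustrated with a minimal sketch. This is not the product's actual implementation; `Template`, `TemplateRegistry`, and the site and template names are hypothetical, chosen only to show central templates that every site sees and that cannot be overridden at site level.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass(frozen=True)
class Template:
    """An inspection template; frozen so a published version cannot be edited in place."""
    name: str
    items: tuple[str, ...]
    version: int = 1


@dataclass
class TemplateRegistry:
    """Hypothetical registry: one central set plus optional site-local additions."""
    central: dict[str, Template] = field(default_factory=dict)
    site_local: dict[str, dict[str, Template]] = field(default_factory=dict)

    def publish_central(self, name: str, items: list[str]) -> None:
        # A single publish replaces the template for every site at once.
        previous = self.central.get(name)
        version = previous.version + 1 if previous else 1
        self.central[name] = Template(name, tuple(items), version)

    def add_site_template(self, site: str, name: str, items: list[str]) -> None:
        # Sites may add their own templates but may not shadow a central one.
        if name in self.central:
            raise PermissionError(f"'{name}' is a central template")
        self.site_local.setdefault(site, {})[name] = Template(name, tuple(items))

    def templates_for(self, site: str) -> dict[str, Template]:
        # Central entries are merged last, so they always take precedence.
        return {**self.site_local.get(site, {}), **self.central}


registry = TemplateRegistry()
registry.publish_central("vehicle-check", ["brakes", "lights"])
registry.add_site_template("depot-a", "cold-store", ["door seal"])
# A regulatory change: add one item centrally; every site picks it up.
registry.publish_central("vehicle-check", ["brakes", "lights", "tyre tread"])
```

In this sketch a site never holds its own copy of a central template, which is what keeps the standard set from drifting as sites are added.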

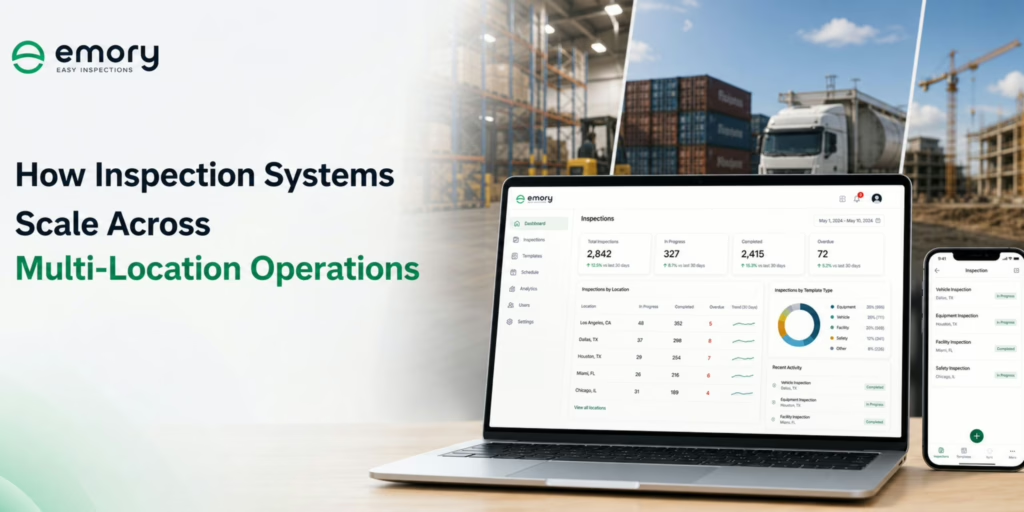

Centralised Data Storage with Site-Level Access Controls

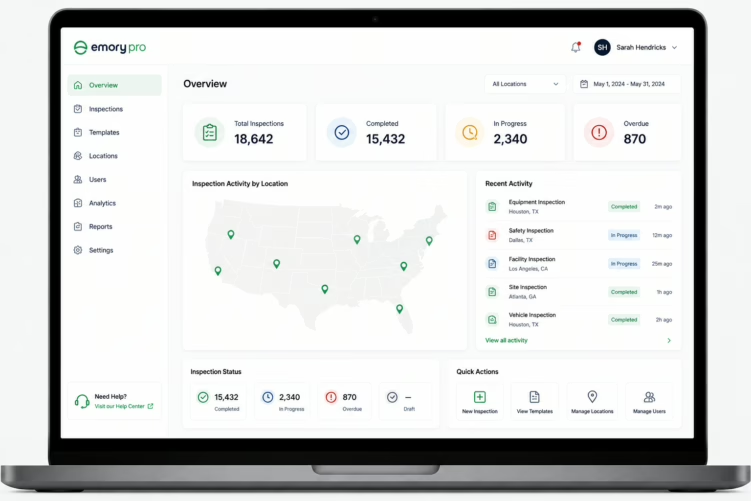

All inspection data is stored in a single centralised database. Site-level managers have access to their own site’s data. Regional managers have access to the sites in their region. Central operations and compliance teams have access to all sites.

This access control structure keeps site-level data out of separate site-level systems, which would make aggregate reporting impossible, while still preventing individual sites from accessing other sites’ operational data.
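As a rough illustration of the three access tiers, the sketch below filters one shared record set by the caller's role. The role names, the `REGION_SITES` mapping, and the record layout are assumptions made for the example, not details of the actual system.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical mapping of regions to the sites they contain.
REGION_SITES = {
    "north": {"depot-a", "depot-b"},
    "south": {"depot-c"},
}


@dataclass(frozen=True)
class User:
    name: str
    role: str                     # assumed values: "site", "regional", "central"
    scope: Optional[str] = None   # a site name or a region name; unused for central


def visible_sites(user: User) -> set:
    """Return the set of sites whose data this user may read."""
    all_sites = set().union(*REGION_SITES.values())
    if user.role == "central":
        return all_sites
    if user.role == "regional":
        return set(REGION_SITES.get(user.scope, set()))
    if user.role == "site" and user.scope in all_sites:
        return {user.scope}
    return set()  # unknown roles see nothing


def query_inspections(user: User, records: list) -> list:
    """All records live in one store; access is applied as a filter at read time."""
    allowed = visible_sites(user)
    return [r for r in records if r["site"] in allowed]


RECORDS = [
    {"id": 1, "site": "depot-a"},
    {"id": 2, "site": "depot-b"},
    {"id": 3, "site": "depot-c"},
]
site_view = query_inspections(User("sam", "site", "depot-a"), RECORDS)
regional_view = query_inspections(User("rita", "regional", "north"), RECORDS)
central_view = query_inspections(User("cho", "central"), RECORDS)
```

Because the restriction is applied when data is read rather than by splitting the storage, the central view can aggregate across every site without any consolidation step.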

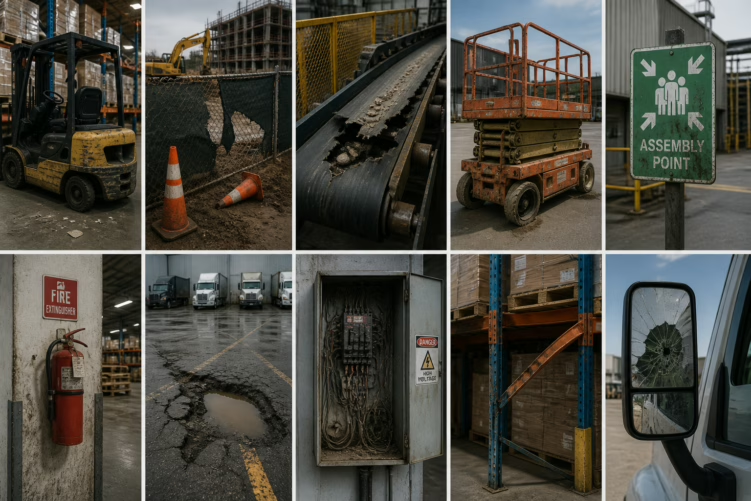

Automated Finding Routing with Escalation

Findings are routed automatically based on configurable rules that can account for finding type, severity, asset category, and site. High-severity findings can be routed simultaneously to site-level and regional reviewers. Findings that are not reviewed within defined timeframes are escalated automatically to the next level.

This routing architecture ensures that finding management is consistent across all sites; it does not depend on site-specific processes or individual site managers’ email habits.
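The routing and escalation logic can be sketched as a small rule table plus a time check. Everything here is illustrative: the rule format, the review windows, and the three-level chain are assumptions for the example, and a real configuration would likely be richer.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

# Assumed escalation order, lowest level first.
ESCALATION_CHAIN = ["site", "regional", "central"]

# Assumed review windows per severity; actual timeframes would be configurable.
REVIEW_WINDOW = {
    "high": timedelta(hours=4),
    "medium": timedelta(hours=24),
    "low": timedelta(hours=72),
}

# Hypothetical rules: (conditions to match, reviewer levels). First match wins.
# Each reviewer list is assumed to be a prefix of ESCALATION_CHAIN.
ROUTING_RULES = [
    ({"severity": "high"}, ["site", "regional"]),
    ({"asset_category": "vehicle", "finding_type": "brakes"}, ["site", "regional"]),
    ({}, ["site"]),  # default rule: empty conditions match every finding
]


@dataclass
class Finding:
    site: str
    finding_type: str
    severity: str
    asset_category: str
    raised_at: datetime
    reviewed: bool = False


def initial_reviewers(finding: Finding) -> list:
    """Return the reviewer levels from the first rule whose conditions all match."""
    for conditions, reviewers in ROUTING_RULES:
        if all(getattr(finding, key) == value for key, value in conditions.items()):
            return list(reviewers)
    return ["site"]


def current_reviewers(finding: Finding, now: datetime) -> list:
    """Add one escalation level for each review window that passed without a review."""
    reviewers = initial_reviewers(finding)
    if finding.reviewed:
        return reviewers
    window = REVIEW_WINDOW[finding.severity]
    missed_windows = max(0, int((now - finding.raised_at) / window))
    top_level = max(ESCALATION_CHAIN.index(level) for level in reviewers)
    escalated_to = min(top_level + missed_windows, len(ESCALATION_CHAIN) - 1)
    return ESCALATION_CHAIN[: escalated_to + 1]
```

Since the rules live in one shared table, every site routes a given finding the same way, and an unreviewed finding moves up the chain on the clock rather than on someone remembering to forward it.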

Aggregate Reporting with Drill-Down Capability

The system produces aggregate reports across any combination of sites, time periods, and data dimensions. The cross-trans logistics network, which operates across multiple depot locations using Emory Pro, was able to produce a consolidated inspection performance report for the entire network within minutes, a task that previously required manual consolidation of site-level spreadsheets.

Drill-down capability means that an aggregate report showing a high defect rate in a specific category can be explored to the site, asset, and individual inspection record level without requiring a separate reporting exercise.
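A minimal sketch of the aggregate-then-drill-down pattern follows. The record fields and sample data are invented for illustration and are not drawn from any real deployment; the point is that the summary and the detail views come from the same records.

```python
from collections import defaultdict

# Invented sample records; field names are assumptions for this example.
INSPECTIONS = [
    {"id": 1, "site": "depot-a", "asset": "truck-01", "category": "brakes", "defect": True},
    {"id": 2, "site": "depot-a", "asset": "truck-01", "category": "lights", "defect": False},
    {"id": 3, "site": "depot-a", "asset": "truck-02", "category": "brakes", "defect": True},
    {"id": 4, "site": "depot-b", "asset": "truck-07", "category": "brakes", "defect": False},
    {"id": 5, "site": "depot-b", "asset": "truck-07", "category": "lights", "defect": False},
    {"id": 6, "site": "depot-b", "asset": "truck-08", "category": "brakes", "defect": False},
]


def defect_rate(records, group_by):
    """Defect rate per group, keyed by a tuple of the group_by field values."""
    totals = defaultdict(lambda: [0, 0])  # key -> [defects, inspections]
    for record in records:
        key = tuple(record[name] for name in group_by)
        totals[key][0] += int(record["defect"])
        totals[key][1] += 1
    return {key: defects / count for key, (defects, count) in totals.items()}


def drill_down(records, **filters):
    """Narrow the same record set by exact-match filters on any field."""
    return [r for r in records if all(r[k] == v for k, v in filters.items())]


# Network-wide view by category, then progressively narrower views.
by_category = defect_rate(INSPECTIONS, ["category"])
brakes_by_site = defect_rate(drill_down(INSPECTIONS, category="brakes"), ["site"])
brake_defects_at_a = drill_down(INSPECTIONS, category="brakes", site="depot-a", defect=True)
```

Each narrower view is just another filter over the same data, so moving from a network-wide rate to individual inspection records does not require a separate report to be built.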