The inspection workflows that produce the best outcomes (highest audit pass rates, lowest defect escape rates, fastest resolution times, strongest compliance records) share structural characteristics that distinguish them from average and below-average inspection operations.

These characteristics are not primarily about technology. They are about how the inspection process is designed: who is responsible for what, at what point, with what evidence requirement, reviewed by whom, escalated how, and measured against what standard.

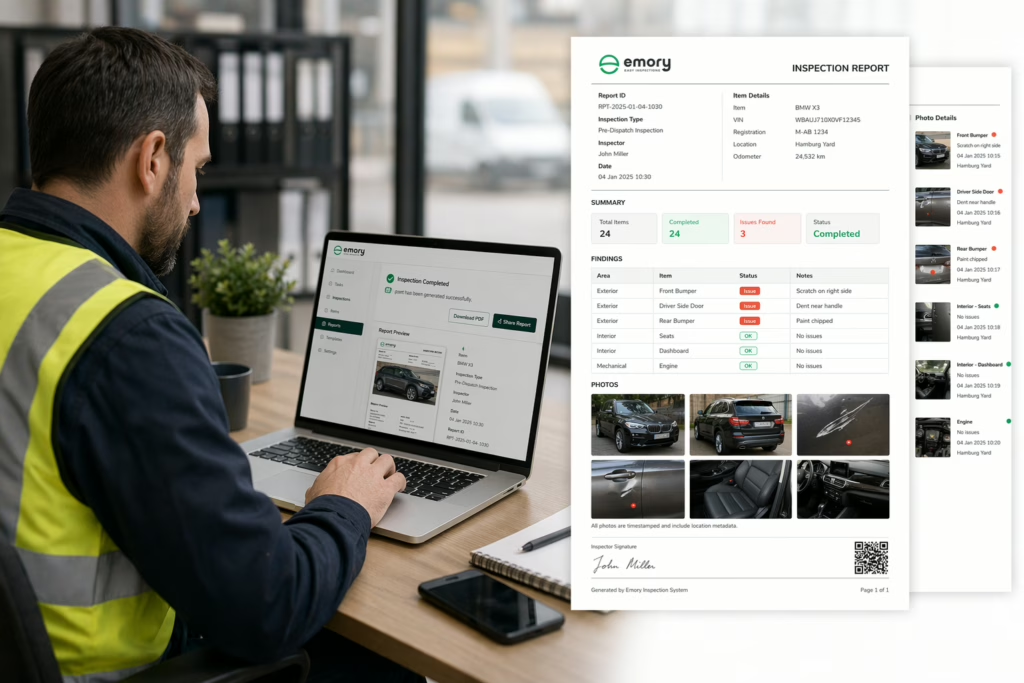

This article identifies those structural characteristics, drawing on patterns observed across logistics, manufacturing, and construction operations, including the operational improvements achieved by Emory Pro users Cross-Trans and PortAgent.