Consistent Checklist Enforcement

Human inspectors, under time pressure or fatigue, skip items. They complete sections out of order. They accept ambiguous evidence when clearer evidence is available.

AI-enforced workflows address this with a digital inspection checklist that makes completion mandatory: the inspector cannot move forward until the current step is complete.

This is not a dramatic AI application, but it is one of the most impactful. In manual systems, the leading cause of inspection failures is not inspector incompetence but items that were skipped or completed inconsistently. Consistent enforcement of checklist sequence and completion requirements removes this failure mode.
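As a rough illustration of sequence and completion enforcement, here is a minimal Python sketch. The class names (`ChecklistItem`, `Inspection`) and the `requires_photo` flag are hypothetical, chosen for this example only; a real inspection platform will model this differently.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class ChecklistItem:
    key: str
    prompt: str
    requires_photo: bool = False  # hypothetical flag: item needs photo evidence


@dataclass
class Inspection:
    items: list
    results: dict = field(default_factory=dict)

    def next_item(self) -> Optional[ChecklistItem]:
        """Return the first item that has not been recorded yet."""
        for item in self.items:
            if item.key not in self.results:
                return item
        return None

    def record(self, key: str, passed: bool, photo: Optional[str] = None) -> None:
        """Record a result, rejecting out-of-order or under-evidenced entries."""
        expected = self.next_item()
        if expected is None:
            raise ValueError("checklist is already complete")
        if key != expected.key:
            raise ValueError(f"out of order: expected '{expected.key}', got '{key}'")
        if expected.requires_photo and not photo:
            raise ValueError(f"'{key}' requires photo evidence")
        self.results[key] = {"passed": passed, "photo": photo}

    def is_complete(self) -> bool:
        return self.next_item() is None
```

In this sketch, skipping ahead or omitting a required photo raises an error instead of silently producing an incomplete record, and a report would only be issued once `is_complete()` returns `True`.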

Automated Report Generation

In manual inspection processes, a significant portion of an inspector’s time is spent not on inspecting but on report writing. Transferring findings from field notes to a formal report, formatting photographs, writing narrative summaries — these tasks can consume as much time as the inspection itself.

Automated report generation eliminates this overhead. When an inspector completes a digital inspection, the report is generated immediately from the captured data: findings are formatted, photographs are embedded with their metadata, and the document is available within seconds of inspection completion.

The inspector’s time is freed for actual inspection.
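The idea of generating the report directly from captured data can be sketched as follows. The record layout (`asset_id`, `findings`, `photos`, and so on) is an assumption made for this example, and the plain-text output stands in for whatever document format a real system would produce.

```python
def render_report(inspection: dict) -> str:
    """Build a plain-text report from one completed inspection record.

    The field names used here are illustrative, not a real schema.
    """
    lines = [
        f"Inspection report: {inspection['asset_id']}",
        f"Inspector: {inspection['inspector']}",
        f"Completed: {inspection['completed_at']}",
        "",
        "Findings:",
    ]
    for finding in inspection["findings"]:
        status = "PASS" if finding["passed"] else "FAIL"
        lines.append(f"- [{status}] {finding['item']}: {finding['note']}")
        # List each photo together with its capture metadata.
        for photo in finding.get("photos", []):
            lines.append(f"    photo: {photo['file']} (taken {photo['taken_at']})")
    failed = sum(1 for f in inspection["findings"] if not f["passed"])
    total = len(inspection["findings"])
    lines += ["", f"Summary: {failed} of {total} items failed."]
    return "\n".join(lines)
```

Because the report is a pure function of the captured record, it can be produced the moment the inspection is submitted, with no transcription step in between.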

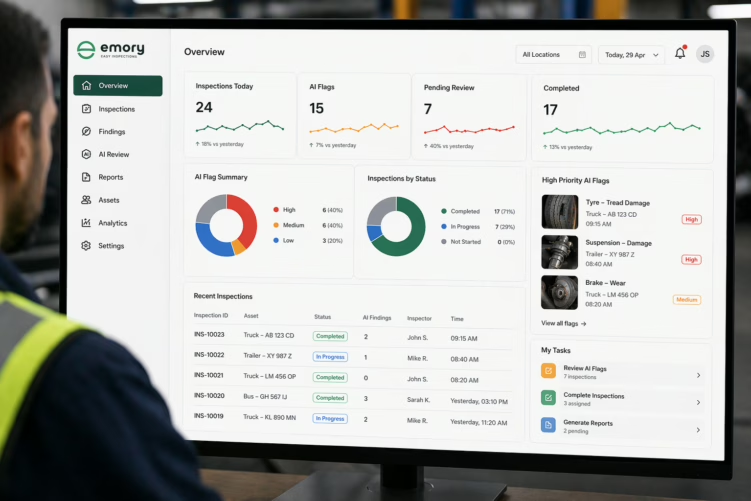

Pattern Detection Across Large Inspection Datasets

One of AI’s most powerful applications in inspection is the analysis of large datasets to identify patterns that would not be visible to an individual inspector.

When thousands of inspection records are analysed, AI can identify which inspection points are most frequently associated with defects, which asset types fail most often at which mileage or age, and which locations produce the most escalations.

This aggregate intelligence enables predictive maintenance scheduling, targeted inspection resource deployment, and process improvements that individual inspection results would not suggest. A single inspector cannot see across 50,000 inspection records. AI can.
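The simplest form of this cross-record analysis is a grouped defect rate. The sketch below uses plain aggregation as a stand-in for whatever model a production system would apply; the record fields (`inspection_point`, `defect`) and the `min_records` parameter are assumptions for illustration.

```python
from collections import Counter


def defect_rates(records, key, min_records=1):
    """Rank the values of `key` by the share of their records showing a defect.

    Groups with fewer than `min_records` records are dropped so that a
    handful of inspections cannot dominate the ranking.
    """
    totals = Counter()
    defects = Counter()
    for record in records:
        group = record[key]
        totals[group] += 1
        if record["defect"]:
            defects[group] += 1
    rates = {
        group: defects[group] / totals[group]
        for group in totals
        if totals[group] >= min_records
    }
    # Highest defect rate first.
    return sorted(rates.items(), key=lambda pair: pair[1], reverse=True)
```

Calling `defect_rates(records, "inspection_point")` ranks inspection points by how often they are associated with defects; passing `"asset_type"` or `"location"` instead answers the other questions from the same records, provided those fields are present.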

Anomaly Detection in Photo Evidence

Computer vision applications in inspection can flag anomalies in photographs: unusual wear patterns, visible damage, liquid contamination, structural irregularities.

In high-volume inspection environments where inspectors review hundreds of similar assets per day, computer vision can serve as a consistent second check, surfacing items that a fatigued inspector might miss.

This capability is genuinely useful, particularly in contexts where defects are visually distinctive and consistent — surface corrosion, liquid staining, visible dents. It is less reliable in contexts where defect assessment requires judgment, contextual knowledge, or tactile information that a photograph cannot capture.
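One way to wire in the "second check" role is to compare the inspector's verdict with a model's output and surface only the disagreements. The sketch below assumes each record already carries an `anomaly_score` between 0 and 1 from some vision model; that score, the field names, and the `0.8` threshold are all hypothetical, and no particular model or library is implied.

```python
def needs_second_look(inspections, threshold=0.8):
    """Return asset IDs the inspector passed but the model scored as anomalous.

    `anomaly_score` is assumed to come from an external vision model;
    this function only routes results and does no image analysis itself.
    """
    return [
        record["asset_id"]
        for record in inspections
        if record["inspector_passed"] and record["anomaly_score"] >= threshold
    ]
```

Routing disagreements to a human for review, instead of letting the model overrule the inspector, keeps judgment-dependent defects in human hands while still catching the visually distinctive ones.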