Inspection documents that are incomplete, inconsistent, or lacking evidence don’t just fail audits. They also fail the organisations that rely on them for dispute resolution, operational accountability, and performance management. A checklist may have been signed. A report may have been submitted. But on closer inspection, these records often can’t do what inspection documents are meant to do.

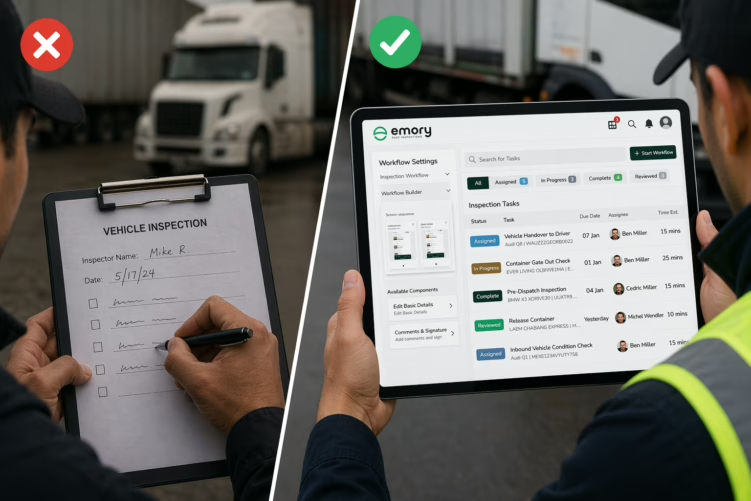

The weaknesses that cause documents to fail are rarely accidental. They’re built into the system: the same problems recur across industries and organisations of all sizes because they stem from the limitations of paper-based or partially digitised inspection processes.

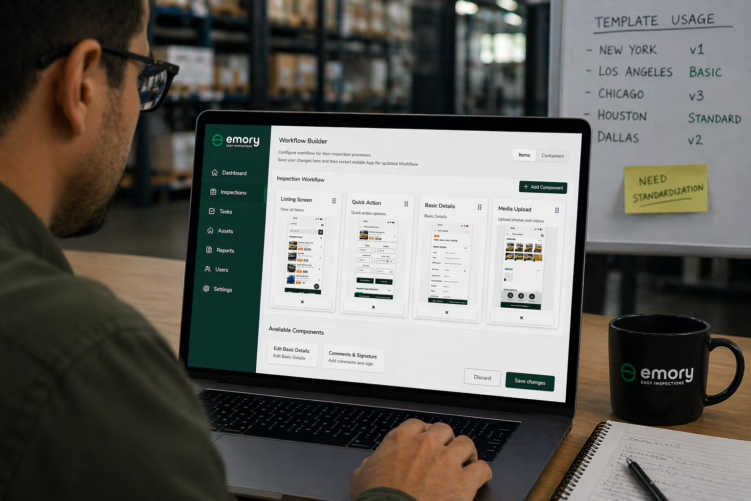

This article examines the most common documentation failures, explains why each matters operationally, and describes how they can be resolved with a purpose-built digital inspection app.